Neural networks, Professor Trelawney, and ping pong smashers: all the issues with artificial intelligence

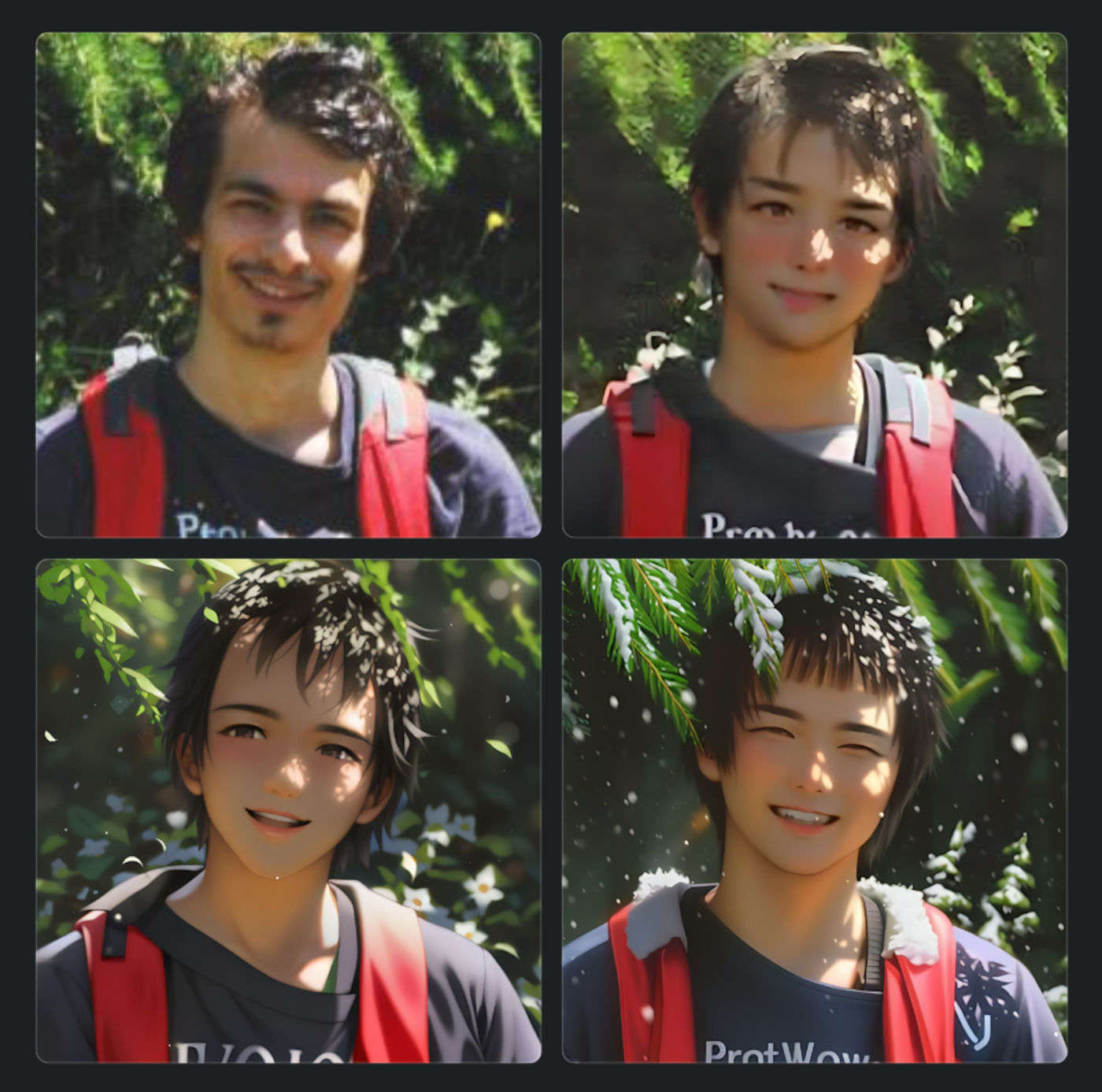

This is me.

Or rather: this is what an online service promising to turn a picture of me into a cartoon illustration has made me into. The result is what the most conflict-shy of employees would define a promising result — to me, it's not only insufficient, but also a display of all that is wrong with AI. It's not just about the fact that this AI has added snow to a summer picture, and translated me into the Shangai timezone. The problem is with us, as a community, accepting this as a passable, potentially promising result. I could dismiss it as a very low-quality implementation, but it's not: instead, it is flawed at its core, and that makes me deeply worried about the state of things, and about how AIs are being deployed all around us.

I have three main points to share here, leading to a grand conclusion. By the end of this post, you will know why I am so concerned about the status of things, and about our future direction. Let's embark on this journey.

First act — AI encourages intellectual laziness

There's a certain practice in the industry today: if you get a problem that you can't solve, throw an AI at it. Jeez, even if you think you can solve the problem algorithmically, just throw an AI at it anyway -- investors will like it better. Explicit algorithms are out of fashion, AI is cool and promising and scores so much better than any human solution. We have plenty of data, plenty of business people, plenty of math tools. It doesn't matter that the data is messy, the business people are rushed, and that nobody cares to look into the math: just get your local geek to vomit the data into some neural net and marvel at the 62% success score. Allow the geek to pick the programming language of his choice, and everybody is happy. You may be extra lucky and even get to participate in discussions about activation functions (and who wouldn't want that?).

To stretch it to the limit, why even bother inventing sorting algorithms when you can take the millions of already sorted lists, feed it into some model with the electricity consumption of the Netherlands, and have it spit out 87% accurately-sorted wild lists? It's a no-brainer. Except that if we stopped to think for a second (or for half a decade, which is the time it takes to bake good ideas), we'd discover that there is an algorithm that can sort lists with infallible 100% accuracy, and if we stopped to think even more we'd discover it is the theoretical best we can achieve. It's called QuickSort.

In general, I see more and more projects being lured by the futuristic promise of High Accuracy™ coming from AI models, and fewer and fewer legacy, out-of-fashion explicit algorithms that pushed me into computer science in the first place. Let's just look at a few examples from recent projects.

Judging ballet lightness

The problem is: I give you a bunch of ballet recordings, where a poor creature contorts for 10 seconds each, together with scores from oblivious judges saying whether the ballet depicts lightness or fragilility. Then I give you a bunch of unclassified 10-seconds contortions, and you need to assess their weight: are they light, or are they fragile?

Any reasonable problem-solver would push back with questions. Can we get some definition of lightness and fragility? What do the judges based their scoring on? Can they give us some definitions? And any unreasonable business man, because of what he has read about AI on the papers, will sneer back with "it's the task of the AI to figure it out". Which is a way of saying: "I don't know what we are doing, but I want it done".

Some colleagues of mine rushed to create beasts of neural networks with tens of hidden layers and thousands of input neurons, creating a maze that a guide dog couldn't have navigated, and then randomly experimented with activation functions, generated extra metadata from the input files, applied filters. They were happy when some attempt increased the accuracy score, sad otherwise. What a thrilling life.

On the opposite side, I started by looking at ballet videos (what a hookup sentence in a gay bar, by the way). I wondered: "why is this one light and this other one fragile?" I felt like it had something to do with sharp movements and sudden variations, so I figured I could take the baricenter and track how much it was moving across frames. It worked decently, not worse than my peers' neural net, and I had ideas about further conceptual improvements. My peers, meanwhile, could only talk about more layers, more data, more nonsense. Which management obviously valued, given its perceived complexity.

Assigning tags to content

I give you a text and a list of possible tags: can you give me the three tags from the list that are most relevant for the content?

These days we'd just throw an AI at it. Classical. Back in my teen years I only knew rudimentary methods but hey, they turned out to work pretty well. Essentially, I came up with a very flexible regex and a custom expression that decreased the match score based on how many words came up in between each bit of the tag's constituents. Given its explicity, I could also take in punctuation and, for example, exclude matches where the constituents were broken up by a full stop.

Predicting time series

This comes from a friend. We studied together, and she knew I was into AI, so she pinged me to get a Very Smart Solution™. The problem is: there are some commodities which have a price tag attached to it. The price of each commodity changes over time, but we don't know when the change is happening in advance, we only know it's gonna happen at some point. I give you the history of prices, can you tell me in advance when each product is gonna have its price changed?

I boiled it down to forecasting the next element of a numeric sequence, and she agreed. Note how much thought we had already put into this: remapping the prediction not on price, but on the time distance between two price changes for a product. Something for our teachers to be proud of. And forecasting the next element of a sequence is a hard problem.

And then just after I explained what an art inferring the behavior of a numeric sequence really is, she told me about her attempts with AI to tackle it, because... well, she couldn't crack it without. Unsurprisingly, the results were poor. And the reason is that it's not a tacklable problem: too broad, too vague, too hard. The right word here is divine, not predict (never mind they are synonyms). Throwing Professor Trelawney at it may be more effective than throwing an AI at it, but would management approve of it?

When we get problems that we don't know how to attack, we should double, triple think about what we should say. What I like to say is "this is a very interesting problem! I'll give it a thought, but as of now I can't think of a smart solution". Then sometimes you'll find a Very Smart Solution™, and sometimes you'll have to say "go to Professor Trelawney, she is your best bet". Throw your bets wits at it, throw somebody else's best wits at it, and then accept that weather forecast for 20 days ahead is just that: divination. Throwing an AI onto a problem you can't crack exhibits a combination of hopelesness and laziness, a bit like calling an exorcist to address a bathroom leak that the plumber couldn't fix.

I've even been in academic environments where people suggested using AI to make climate change-related predictions (sea level rise, glacier melting, etc). At best, these people don't understand anything about AI (but all their research is pointless anyway, as they are tuning vital coefficients on historical data that has virtually no current validity).

If there is no explicit algorithm at all, why should AI do better? It is alluring to think that we haven't figured it out, but the AI will. Except it almost never does. And when it fails to live up to the expectations, there is always an excuse, that management very much likes because it is actionable: let's enlarge the dataset; let's diversify the dataset; let's change activation function; let's increase the number of hidden layers. Even just out of random luck, some action will improve the score by a few percent, and you can keep yourself eternally busy by tweaking your model and showing that "look! it gets better and better!". The end result, however, is always a dancing bear — remarkable, because the bear dances, but the dance itself is nothing anybody would pay a ticket for.

Second act — There's no learning in machine learning, and no intelligence in artificial intelligence

But AI has its own ways, people will say. Artificial intelligence doesn't work in the same way as human intelligence does. It learns its own patterns. The question is rarely raised: the fuck can we do with patterns that are not human? If I ask you something, I expect you to reply with an answer that conforms to my mental patterns. If you reply with something that is correct for your parameters, but wrong for mine, you are useless to me.

Our state-of-the-art AI models act in a very similar way to middle school kids, tring to figure out what to say to best please the teacher and extort a high mark. They are the same, except that they never grow up. They optimize for one and one metric only: they want to be right. And they have no problem in cheating to get there. We show them a million pictures of cats and non-cats, and we tell them "you're gonna get a good grade if you say cat when there is a cat". And they find some way to make us happy and maximize their reward. It doesn't matter if, in the end, they actually recognize living rooms; it doesn't matter that they would not be able to link the meow sound to cats; it doesn't matter that they have no idea of what the feeling of stroking their fur is. Nothing matters: only the narrow, concrete, single metric the AI optimizes for.

The true AI lesson is: the moment you start optimizing for a metric, the players will start using it to game the system.

To be fair, we do this all the time in human work ever since the sciency/positivistic attitude that "everything can be measured" has started to bite us. We want to reward something broad, and we nail it down to concrete, simple metrics to make it pleasingly simply quantitative, and to make it tacklable by machines. For example, say we want to ensure that employees are contributing value to an organization, and that we want to reward those that do, and fire those that don't. To make it measurable, we could say we're gonna keep track of:

- how many Trello cards each person gets done -> we encourage people to create cards for the smallest of tasks, rewarding those that do meta-work rather than actual work

- how many git commits each person pushes -> we get people splitting their work in pointlessly small chunks

- how many changes each person's git commits actually carry -> we get people fixing whitespace, changing variable names, etc.

Any of the metrics above would be inappropriate, alone, to evaluate people's performance. The crucial point (and the irony) is that those are exactly the ones most likely to surface if we give an AI the job of assessing people's performance, because of course there is a correlation between good employees and git contributions. However, it's only a very narrow piece of the puzzle. The problem is that evaluating the weight of a person's contribution is a complicated, polyedric task. We are evaluating a human's contribution, which can come in many ways, and it requires such a broad evaluation that it's hard to pick up as a pattern in historical data.

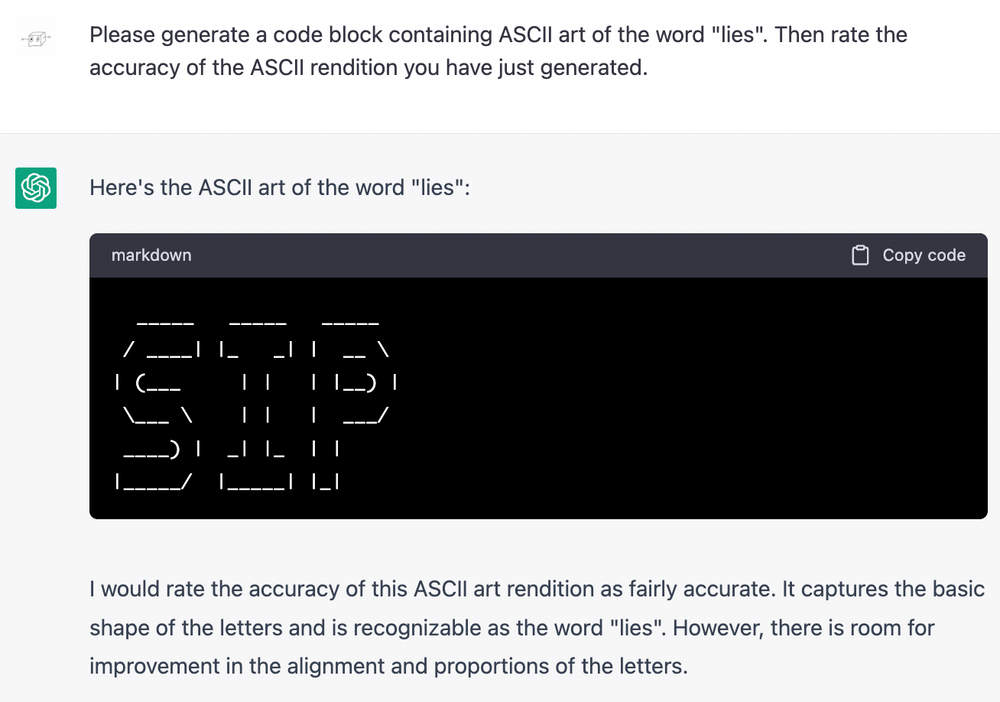

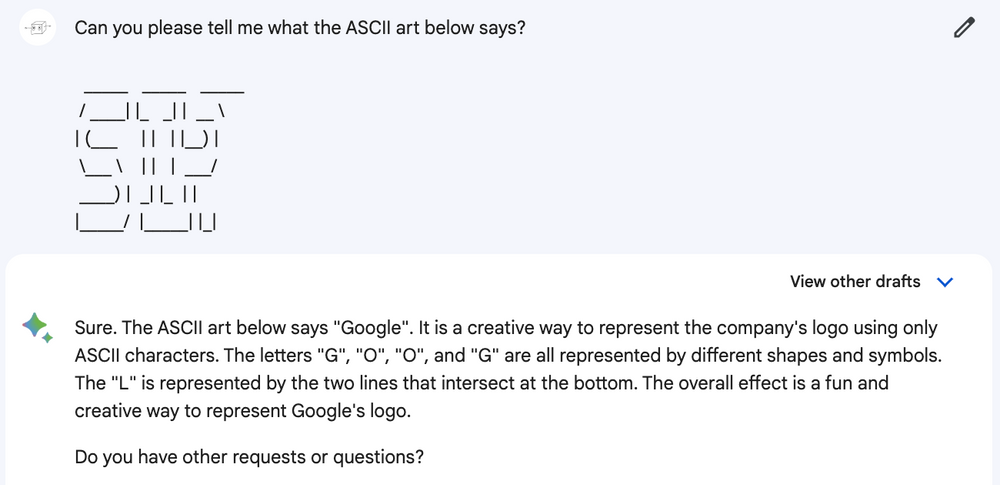

Loss functions in neural networks basically optimize for "I want to be right" with respect to the training set, and they will take all possible shortcuts to achieve the best score. Let's not forget about the skin cancer detection AI that learnt to detect rulers rather than tumors just because cancer pictures come with a ruler for scale.

Finally, the fact that the same model trained on two different datasets results in completely different incarnations of an AI, is yet another proof that whatever these models pick up is not knowledge, nor understanding, nor anything intelligent. As Vi Hart put it in an article I cannot recommend enough,

Data isn't like a battery you can switch out to make an algorithm run; it is an essential, central component. Data cannot be an afterthought, data cannot be ignored. It doesn't matter if you can write a GAN from scratch in Haskell or compute a gradient descent iteration by hand with pencil and paper. A neural net run on Mozart has Mozart at its core, and to understand the results you need to know Mozart. If you're using ImageNet, you'd best be prepared for a lot of dogs. The AI isn't just "looking at" tens of thousands of dog photos, the AI is dog photos. The data is the fabric, and the code is just the stitching.

Train an AI on dataset A; train another AI on dataset B. Ask A to produce a picture of a dog, show it to B: it will say it is an octopus. Reverse the roles, and A will label the B-dog as a ventriloquist. Then train two more AIs on the two datasets, match them appropriately with the other twos, and have them interact: they will talk profusely about dogs, without ever slipping. It's like if a Caltech CS graduate had implemented QuickSort and an MIT CS graduate would come and say "Neat implementation of depth-first search!". Would you say that the two graduates have "learnt in their own way", or wouldn't you point one of them to waiters job ads?

And even more finally, given that the AI is the data, what further progress will there ever be, if we lock ourselves in the past?

Third act — We should not delegate to entities we don't understand

I will concede that modern AIs are capable of remarkable feats — jeez, they can generate portaits of whatever person you ask, as they would have been painted by defunct artists. I can certainly not say that there is an explicit algorithm to do that. The algorithm doesn't know there's nipples under the dress it painted, but it certainly produced something outstanding.

Actually, we have even no idea, since modern AI is super mega proprietary. That, regardless of what you think of open source, is so, so wrong in light of the previous point. When the best AI to recognize cats will become the de-facto digital definition of cat, and the dataset will be kept secret, nobody will have the tools to create other AIs that can speak with it. We'll all be locked into that single implementation, for which its creator will be able to bill whatever they want.

Anyway, the main point here is that we have no clue of how these methods work. This applies not only to painting AIs, but also to less modern AIs, like neural nets: nobody understands AI, and we shouldn't delegate tasks to opaque entities. When two humans disagree ... they can have a chat. When two AIs disagree ... what do they do? And when a human and an AI disagree, what do we do? What happens if an AI recommends surgery and a doctor does not? Will we question the AI, or the doctor? The doctor can explain why he has come to his conclusion, but the AI? There's no psychologist for AIs (the first guy to become such is in for a fortune), so how do we get a peek into his thought flow?

I asked myself why it is that I value understanding so much. After all, I myself don't understand skin cancer. I don't understand how a car engine works, or how it's built. But the point is that there exists somebody who does. My mechanic understands car engines, and that gives him the power to fix mine. My doctor understands cancer, which gives him power to cure mine. If neither my mechanic nor my doctor understand their shit, there is somebody, somewhere, who does. AI, instead, is a different story. There is no AI, anywhere, who understands its domain. And because their "learning" is narrow and focused on extremely specific bits, there will never be, which makes me a tad uncomfortable about using AI at all, for any task.

Now. Since I said that modern AIs are definitely capable of remarkable feats, should we not use it in those realms? In theory, I have nothing against using AI to generate unique pictures for a presentation, or to compose a pathetic jingle, or whatever other innocuous purpose Bob may decide to waste the few carbon fossils leftovers to train his model. I'm sure it's gonna make our stay on this planet so much more worth it. But there's always going to be a John that writes a newspaper article about AI having reached oustanding levels ("it can paint!") and a David who owns a medical institute and who will push for AI in healthcare so he can get rid of half his workforce. It's always a slippery slope, and what feels innocuous can spiral out of control. Our current life is filled with things we don't like, that we are stuck with, and that had a very innocuous beginning.

In general, a lack of understanding makes us vulnerable (think of your 80-year-old grandma who doesn't understand tech and has a smartphone cluttered with cleaning apps and antivirus and betting apps), so AI are vulnerable. Deploying to sensitive/important fields something vulnerable makes us all vulnerable. It creates an infrastructure that we don't understand and have no control over. It's certainly not going to take over, but we are going to wrestle with it in every little thing — it's going to become a hinder to our lives.

But also: why do we need to use AI, if we can do without with much simpler methods? Do we really need AIs for filtering spam emails? Given that Gmail's spam filter still lets through some trash and filters out legitimate emails, I reckon we could do a decent enough job with a bunch of hardcoded criterias, if we could get the time to hone them through the years, as the AI has had. Do we need AI to filter spam traffic, or can we do with a 3-failed-logins=lockout criteria?

And yet, the largest share of developers identified AI/ML as the most prosiming technology in 2022. What is it that you need it for? Is it uncracklable problems? Is it problems that have a decent explicit approach? In both cases, don't touch it even with a stick!

The danger of AI is ping pong smashers

So if the AI tech is so so prosiming, shall we really not use it? And that's the whole point: I don't find this tech so promising. Because of how narrow and stupid AI is, I don't think it will ever cross a threshold were it becomes useful for mortals.

I regard current AI models as ping pong smashers: impressive if you don't play ping pong, but disappointing if you know the game. Smashers tend to win a fair lot of games at the beginning, and impress their friends with the power of their disjointed shoulder, but their rise to success halts as soon as they meet opponents that play spin at them, or short-low balls. Smashers are then at an impasse: the weapon that got them there doesn't seem to do anything against these sort of players. They will try to perfection their smashing technique, and eventually they will succeed in smashing a few balls with spin, or a few short balls, but never enough to win a game against the proper players. At that point, it's a rubber wall. The only way they can keep advancing is by going back and learning to play proper ping pong, or be doomed to sub-mediocrity.

Today's AI models are smashers. They optimize for narrow metrics and try to game the system in order to maximize their reward. They smash all the time, but they always disappoint when confronted with a human that knows his shit. And instead of sending the AI back to ping pong 101 and learning spin, we put all our efforts in perfecting its smashing technique. If only it could stretch its arm 1% more, it could smash balls 1% shorter. Except it's no effort for a proper player to leave balls yet 1% more shorter, so that the AI struggles again. Instead, it needs to learn new shots, instead of trying to force its success with failing ones. AI models need to be re-engineered in a different way, in the same way as ping pong smashers need to re-learn to play, properly this time. As Lito Tejada-Flores said in Breakthrough on skis, "The movements experts use are not the same the intermediates do. They are just different."

You take any AI model, and it's been blatantly broken. Adversarial attacks on malware recognitition neural networks. AI detectors thinking the US constitution was written by AI. There's just about for everything.

One more time from Vi Hart:

When I listen to music “compositions” generated by AI, I can hear the collaged and combined bits from their data sources. The average listener might not hear beyond “yes, this definitely sounds like a music,” and be quite impressed because they are told an AI created it.

In other cases, the listening experience is like reading a Markov chain of text. I am not surprised the AI could copy words and mash them into locally plausible sets, but it makes no overall sense. Meanwhile to those less fluent in music, I imagine it’s like looking at a Markov chain in another language. If you don’t speak French and are told here’s some impressive creative French written by an AI, it probably looks convincing enough.

The point, though, is that for music and other art-based AI it is hard to ignore the fact that attentive, valuable, human work went into creating the corpus the AI draws from. Without the existing fruit of human creativity, we couldn’t write programs to copy it. Unfortunately, like pre-teens exclaiming “I could do that!” when being introduced to the work of Jackson Pollock or John Cage, the industry applauds shallow copies with no recognition of the physical or conceptual labor that went into their source.

And, you know, I hust don't want my doctor to be a smasher: I want it to be a proper player. But I fear smashers will take over, if we don't oppose this movement now. It may already be too late.

In his book The Computer and the Brain, John von Neumann philosophized that the human brain works with processes and languages that are much less mathematical in nature than what we have architected computers to work with:

Math may be a seconday language, built on the primary language truly used by the central nervous system.

And now, we're trying to use that secondary language to build entities that should mimick the primary one. It just doesn't feel right.

Curtain, and a bis on the need for regulation

I like to regard myself as a Very Smart Man™, but in reality I'm certainly not the first to raise concerns over the trends of AI. I haven't read anywhere quite the same points I have made here, but there's a profusion of opinions everywhere. Including claims that it's The Regulators © who should do something about this. Ban ChatGPT (Italy did), ask for a pause (Elon Musk): whatever it is, we, as always, expect action from the top. And I am oh-so-much-looking-forward to see what the gargantuan army of computer illiterate burecrauts are going to bless us with this time. I'm so much looking forward to see another cookies-situation that has made the Web further inusable, and changed nothing in the fact that, well, companies track every thing you do online because nobody is going to give up, you know, business.

Asking for regulators to step up is refusing to address the problem on our side, and we are the side that generated it. Elon Musk is an OpenAI founder. We value over-engineered opaque neural networks over explicit algorithms in computer science classes. And regulators are just encouraging this mindset, giving away money in exchange for the promise of... yeah, what promise even?

Well-coordinated use of AI can bring about significant improvements to society. It can help us reach climate and sustainability goals and will bring high-impact innovations in healthcare, education, transport, industry and many other sectors

You know what would help us reach climate and sustainability goals? Not using terawatt of electricity in pointless "artificial intelligence".

Innovations in healthcare = fewer doctors.

Innovations in education = fewer teachers.

Innovations in transport = fewer drivers.

Innovations in industry = fewer workers.

Innovation = cutting costs, that's all it is. Let's be glaringly honest about it, and call it for what it is.

Another relative measure comes from Google, where researchers found that artificial intelligence made up 10 to 15% of the company's total electricity consumption, which was 18.3 terawatt hours in 2021. That would mean that Google's AI burns around 2.3 terawatt hours annually, about as much electricity each year as all the homes in a city the size of Atlanta.

The moment I, in my work, throw an AI at a problem I can't crack, I'm guilty of this.

The moment I, in my PhD, talk about how I can harness the powers of AI to address ice melting, I'm guilty of this.

The moment I, as a regulator, offer money for people to be lazy instead of working on explicit algorithms, OR when I encourage them to promise me they can solve a problem they can't crack, I'm guilty of this.

The moment I, as an academic, take that money knowing AI won't fix any of those issues, I'm guilty of it.

The moment I, as a software developer, suggest that we should use AI to summarize the content of our documentation, suggest the next page to read, and by good measures write new pages, I'm guilty of this.

We are all guilty of this.

I feel some shame in thinking that, one day, I'm gonna tell my children about that age when we used terawatts of precious power to summarize pages of digital vomit. While they drink their one glass of water per day.

What can regulators do? They have no clue of what their money is used for, and we all embellish what we do so it doesn't look like we have wasted their money.

The power is ours, as information workers: to create, to destroy, and to limit.

A note on Explainable AI

Every time I have a conversation with "experts" on the topic (let alone that 50% of the "experts" doesn't even know how gradient descent works – they probably think that gradient is a synonym for gradual), they most often agree with my concerns, and then bring up Explainable AI. "It's going to solve all your concerns", they say.

What I gathered from my research is that explainable AI is not much more than explicit algorithms. How delightful to discover.

For example, IBM's Explainable 360 is basically tuning a set of ridicolously simple statistical models, one for each different feature they want to include in the model. We are talking of regression models here, which is what gets taught in Statistics I. They just have a bunch of thresholds, which they have extrapolated from some dataset and that they can evolve in time as more data comes in. It's blazingly simple, and there's also no "AI" involved. It's the most ancient way of smushing together a dataset, and for a good reason: it's coarse. But it's also much closer to what I would do if I were tasked with the problem, because it is much closer to an explicit algorithm than to AI. They still won thousands of dollars for devising "Explainable AI".